[深度学习] PyTorch 实现双向LSTM 情感分析-程序员宅基地

技术标签: lstm NLP nlp 深度学习 pytorch

一 前言

情感分析(Sentiment Analysis),也称为情感分类,属于自然语言处理(Natural Language Processing,NLP)领域的一个分支任务,随着互联网的发展而兴起。多数情况下该任务分析一个文本所呈现的信息是正面、负面或者中性,也有一些研究会区分得更细,例如在正负极性中再进行分级,区分不同情感强度.

文本情感分析(Sentiment Analysis)是自然语言处理(NLP)方法中常见的应用,也是一个有趣的基本任务,尤其是以提炼文本情绪内容为目的的分类。它是对带有情感色彩的主观性文本进行分析、处理、归纳和推理的过程。

情感分析中的情感极性(倾向)分析。所谓情感极性分析,指的是对文本进行褒义、贬义、中性的判断。在大多应用场景下,只分为两类。例如对于“喜爱”和“厌恶”这两个词,就属于不同的情感倾向。

本文将采用LSTM模型,训练一个能够识别文本postive, negative情感的分类器。

RNN网络因为使用了单词的序列信息,所以准确率要比前向传递神经网络要高。

网络结构:

首先,将单词传入 embedding层,之所以使用嵌入层,是因为单词数量太多,使用嵌入式词向量来表示单词更有效率。在这里我们使用word2vec方式来实现,而且特别神奇的是,我们只需要加入嵌入层即可,网络会自主学习嵌入矩阵

参考下图

通过embedding 层, 新的单词表示传入 LSTM cells。这将是一个递归链接网络,所以单词的序列信息会在网络之间传递。最后, LSTM cells连接一个sigmoid output layer 。 使用sigmoid可以预测该文本是 积极的 还是 消极的 情感。输出层只有一个单元节点(使用sigmoid激活)。

只需要关注最后一个sigmoid的输出,损失只计算最后一步的输出和标签的差异。

文件说明:

(1)reviews.txt 是原始文本文件,共25000条,一行是一篇英文电影影评文本

(2)labels.txt 是标签文件,共25000条,一行是一个标签,positive 或者 negative

二 模型训练与预测

1、Data Preprocessing

建任何模型的第一步,永远是数据清洗。 因为使用embedding 层,需要将单词编码成整数。

我们要去除标点符号。 同时,去除不同文本之间有分隔符号 \n,我们先把\n当成分隔符号,分割所有评论。 然后在将所有评论再次连接成为一个大的文本。

import numpy as np

# read data from text files

with open('./data/reviews.txt', 'r') as f:

reviews = f.read()

with open('./data/labels.txt', 'r') as f:

labels = f.read()

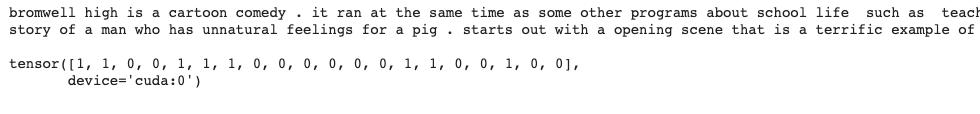

print(reviews[:1000])

print()

print(labels[:20])

from string import punctuation

# get rid of punctuation

reviews = reviews.lower() # lowercase, standardize

all_text = ''.join([c for c in reviews if c not in punctuation])

# split by new lines and spaces

reviews_split = all_text.split('\n')

all_text = ' '.join(reviews_split)

# create a list of words

words = all_text.split()

2、Encoding the words

embedding lookup要求输入的网络数据是整数。最简单的方法就是创建数据字典:{单词:整数}。然后将评论全部一一对应转换成整数,传入网络。

# feel free to use this import

from collections import Counter

## Build a dictionary that maps words to integers

counts = Counter(words)

vocab = sorted(counts, key=counts.get, reverse=True)

vocab_to_int = {word: ii for ii, word in enumerate(vocab, 1)}

## use the dict to tokenize each review in reviews_split

## store the tokenized reviews in reviews_ints

reviews_ints = []

for review in reviews_split:

reviews_ints.append([vocab_to_int[word] for word in review.split()])

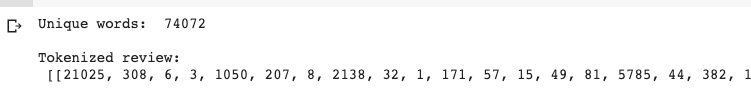

# stats about vocabulary

print('Unique words: ', len((vocab_to_int))) # should ~ 74000+

print()

# print tokens in first review

print('Tokenized review: \n', reviews_ints[:1])

补充enumerate函数用法:

在enumerate函数内写上int整型数字,则以该整型数字作为起始去迭代生成结果。

3、Encoding the labels

将标签 “positive” or "negative"转换为数值。

# 1=positive, 0=negative label conversion

labels_split = labels.split('\n')

encoded_labels = np.array([1 if label == 'positive' else 0 for label in labels_split])

# outlier review stats

review_lens = Counter([len(x) for x in reviews_ints])

print("Zero-length reviews: {}".format(review_lens[0]))

print("Maximum review length: {}".format(max(review_lens)))

消除长度为0的行

print('Number of reviews before removing outliers: ', len(reviews_ints))

## remove any reviews/labels with zero length from the reviews_ints list.

# get indices of any reviews with length 0

non_zero_idx = [ii for ii, review in enumerate(reviews_ints) if len(review) != 0]

# remove 0-length reviews and their labels

reviews_ints = [reviews_ints[ii] for ii in non_zero_idx]

encoded_labels = np.array([encoded_labels[ii] for ii in non_zero_idx])

print('Number of reviews after removing outliers: ', len(reviews_ints))![]()

4、Padding sequences

将所以句子统一长度为200个单词:

1、评论长度小于200的,我们对其左边填充0

2、对于大于200的,我们只截取其前200个单词

#选择每个句子长为200

seq_len = 200

from tensorflow.contrib.keras import preprocessing

features = np.zeros((len(reviews_ints),seq_len),dtype=int)

#将reviews_ints值逐行 赋值给features

features = preprocessing.sequence.pad_sequences(reviews_ints,200)

features.shape或者

def pad_features(reviews_ints, seq_length):

''' Return features of review_ints, where each review is padded with 0's

or truncated to the input seq_length.

'''

# getting the correct rows x cols shape

features = np.zeros((len(reviews_ints), seq_length), dtype=int)

# for each review, I grab that review and

for i, row in enumerate(reviews_ints):

features[i, -len(row):] = np.array(row)[:seq_length]

return features

# Test your implementation!

seq_length = 200

features = pad_features(reviews_ints, seq_length=seq_length)

## test statements - do not change - ##

assert len(features)==len(reviews_ints), "Your features should have as many rows as reviews."

assert len(features[0])==seq_length, "Each feature row should contain seq_length values."

# print first 10 values of the first 30 batches

print(features[:30,:10])

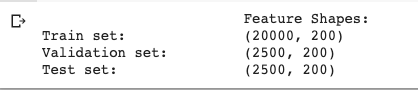

5、Training, Test划分

split_frac = 0.8

## split data into training, validation, and test data (features and labels, x and y)

split_idx = int(len(features)*split_frac)

train_x, remaining_x = features[:split_idx], features[split_idx:]

train_y, remaining_y = encoded_labels[:split_idx], encoded_labels[split_idx:]

test_idx = int(len(remaining_x)*0.5)

val_x, test_x = remaining_x[:test_idx], remaining_x[test_idx:]

val_y, test_y = remaining_y[:test_idx], remaining_y[test_idx:]

## print out the shapes of your resultant feature data

print("\t\t\tFeature Shapes:")

print("Train set: \t\t{}".format(train_x.shape),

"\nValidation set: \t{}".format(val_x.shape),

"\nTest set: \t\t{}".format(test_x.shape))或

from sklearn.model_selection import ShuffleSplit

ss = ShuffleSplit(n_splits=1,test_size=0.2,random_state=0)

for train_index,test_index in ss.split(np.array(reviews_ints)):

train_x = features[train_index]

train_y = labels[train_index]

test_x = features[test_index]

test_y = labels[test_index]

print("\t\t\tFeature Shapes:")

print("Train set: \t\t{}".format(train_x.shape),

"\nTrain_Y set: \t{}".format(train_y.shape),

"\nTest set: \t\t{}".format(test_x.shape))

6. DataLoaders and Batching

import torch

from torch.utils.data import TensorDataset, DataLoader

# create Tensor datasets

train_data = TensorDataset(torch.from_numpy(train_x), torch.from_numpy(train_y))

valid_data = TensorDataset(torch.from_numpy(val_x), torch.from_numpy(val_y))

test_data = TensorDataset(torch.from_numpy(test_x), torch.from_numpy(test_y))

# dataloaders

batch_size = 50

# make sure the SHUFFLE your training data

train_loader = DataLoader(train_data, shuffle=True, batch_size=batch_size)

valid_loader = DataLoader(valid_data, shuffle=True, batch_size=batch_size)

test_loader = DataLoader(test_data, shuffle=True, batch_size=batch_size)

# obtain one batch of training data

dataiter = iter(train_loader)

sample_x, sample_y = dataiter.next()

print('Sample input size: ', sample_x.size()) # batch_size, seq_length

print('Sample input: \n', sample_x)

print()

print('Sample label size: ', sample_y.size()) # batch_size

print('Sample label: \n', sample_y)

7. 双向LSTM模型

1. 判断是否有GPU

# First checking if GPU is available

train_on_gpu=torch.cuda.is_available()

if(train_on_gpu):

print('Training on GPU.')

else:

print('No GPU available, training on CPU.')import torch.nn as nn

class SentimentRNN(nn.Module):

"""

The RNN model that will be used to perform Sentiment analysis.

"""

def __init__(self, vocab_size, output_size, embedding_dim, hidden_dim, n_layers, bidirectional=True, drop_prob=0.5):

"""

Initialize the model by setting up the layers.

"""

super(SentimentRNN, self).__init__()

self.output_size = output_size

self.n_layers = n_layers

self.hidden_dim = hidden_dim

self.bidirectional = bidirectional

# embedding and LSTM layers

self.embedding = nn.Embedding(vocab_size, embedding_dim)

self.lstm = nn.LSTM(embedding_dim, hidden_dim, n_layers,

dropout=drop_prob, batch_first=True,

bidirectional=bidirectional)

# dropout layer

self.dropout = nn.Dropout(0.3)

# linear and sigmoid layers

if bidirectional:

self.fc = nn.Linear(hidden_dim*2, output_size)

else:

self.fc = nn.Linear(hidden_dim, output_size)

self.sig = nn.Sigmoid()

def forward(self, x, hidden):

"""

Perform a forward pass of our model on some input and hidden state.

"""

batch_size = x.size(0)

# embeddings and lstm_out

x = x.long()

embeds = self.embedding(x)

lstm_out, hidden = self.lstm(embeds, hidden)

# if bidirectional:

# lstm_out = lstm_out.contiguous().view(-1, self.hidden_dim*2)

# else:

# lstm_out = lstm_out.contiguous().view(-1, self.hidden_dim)

# dropout and fully-connected layer

out = self.dropout(lstm_out)

out = self.fc(out)

# sigmoid function

sig_out = self.sig(out)

# reshape to be batch_size first

sig_out = sig_out.view(batch_size, -1)

sig_out = sig_out[:, -1] # get last batch of labels

# return last sigmoid output and hidden state

return sig_out, hidden

def init_hidden(self, batch_size):

''' Initializes hidden state '''

# Create two new tensors with sizes n_layers x batch_size x hidden_dim,

# initialized to zero, for hidden state and cell state of LSTM

weight = next(self.parameters()).data

number = 1

if self.bidirectional:

number = 2

if (train_on_gpu):

hidden = (weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_().cuda(),

weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_().cuda()

)

else:

hidden = (weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_(),

weight.new(self.n_layers*number, batch_size, self.hidden_dim).zero_()

)

return hidden是否使用双向LSTM(在测试集上效果更好一些)

# Instantiate the model w/ hyperparams

vocab_size = len(vocab_to_int)+1 # +1 for the 0 padding + our word tokens

output_size = 1

embedding_dim = 400

hidden_dim = 256

n_layers = 2

bidirectional = False #这里为True,为双向LSTM

net = SentimentRNN(vocab_size, output_size, embedding_dim, hidden_dim, n_layers, bidirectional)

print(net)

8 Train

# loss and optimization functions

lr=0.001

criterion = nn.BCELoss()

optimizer = torch.optim.Adam(net.parameters(), lr=lr)

# training params

epochs = 4 # 3-4 is approx where I noticed the validation loss stop decreasing

print_every = 100

clip=5 # gradient clipping

# move model to GPU, if available

if(train_on_gpu):

net.cuda()

net.train()

# train for some number of epochs

for e in range(epochs):

# initialize hidden state

h = net.init_hidden(batch_size)

counter = 0

# batch loop

for inputs, labels in train_loader:

counter += 1

if(train_on_gpu):

inputs, labels = inputs.cuda(), labels.cuda()

# Creating new variables for the hidden state, otherwise

# we'd backprop through the entire training history

h = tuple([each.data for each in h])

# zero accumulated gradients

net.zero_grad()

# get the output from the model

output, h = net(inputs, h)

# calculate the loss and perform backprop

loss = criterion(output.squeeze(), labels.float())

loss.backward()

# `clip_grad_norm` helps prevent the exploding gradient problem in RNNs / LSTMs.

nn.utils.clip_grad_norm_(net.parameters(), clip)

optimizer.step()

# loss stats

if counter % print_every == 0:

# Get validation loss

val_h = net.init_hidden(batch_size)

val_losses = []

net.eval()

for inputs, labels in valid_loader:

# Creating new variables for the hidden state, otherwise

# we'd backprop through the entire training history

val_h = tuple([each.data for each in val_h])

if(train_on_gpu):

inputs, labels = inputs.cuda(), labels.cuda()

output, val_h = net(inputs, val_h)

val_loss = criterion(output.squeeze(), labels.float())

val_losses.append(val_loss.item())

net.train()

print("Epoch: {}/{}...".format(e+1, epochs),

"Step: {}...".format(counter),

"Loss: {:.6f}...".format(loss.item()),

"Val Loss: {:.6f}".format(np.mean(val_losses)))

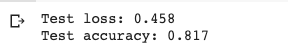

9 Test

# Get test data loss and accuracy

test_losses = [] # track loss

num_correct = 0

# init hidden state

h = net.init_hidden(batch_size)

net.eval()

# iterate over test data

for inputs, labels in test_loader:

# Creating new variables for the hidden state, otherwise

# we'd backprop through the entire training history

h = tuple([each.data for each in h])

if(train_on_gpu):

inputs, labels = inputs.cuda(), labels.cuda()

# get predicted outputs

output, h = net(inputs, h)

# calculate loss

test_loss = criterion(output.squeeze(), labels.float())

test_losses.append(test_loss.item())

# convert output probabilities to predicted class (0 or 1)

pred = torch.round(output.squeeze()) # rounds to the nearest integer

# compare predictions to true label

correct_tensor = pred.eq(labels.float().view_as(pred))

correct = np.squeeze(correct_tensor.numpy()) if not train_on_gpu else np.squeeze(correct_tensor.cpu().numpy())

num_correct += np.sum(correct)

# -- stats! -- ##

# avg test loss

print("Test loss: {:.3f}".format(np.mean(test_losses)))

# accuracy over all test data

test_acc = num_correct/len(test_loader.dataset)

print("Test accuracy: {:.3f}".format(test_acc))

三. 模型Inference

# negative test review

test_review_neg = 'The worst movie I have seen; acting was terrible and I want my money back. This movie had bad acting and the dialogue was slow.'

from string import punctuation

def tokenize_review(test_review):

test_review = test_review.lower() # lowercase

# get rid of punctuation

test_text = ''.join([c for c in test_review if c not in punctuation])

# splitting by spaces

test_words = test_text.split()

# tokens

test_ints = []

test_ints.append([vocab_to_int[word] for word in test_words])

return test_ints

# test code and generate tokenized review

test_ints = tokenize_review(test_review_neg)

print(test_ints)

# test sequence padding

seq_length=200

features = pad_features(test_ints, seq_length)

print(features)

# test conversion to tensor and pass into your model

feature_tensor = torch.from_numpy(features)

print(feature_tensor.size())![]()

![]()

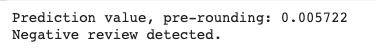

def predict(net, test_review, sequence_length=200):

net.eval()

# tokenize review

test_ints = tokenize_review(test_review)

# pad tokenized sequence

seq_length=sequence_length

features = pad_features(test_ints, seq_length)

# convert to tensor to pass into your model

feature_tensor = torch.from_numpy(features)

batch_size = feature_tensor.size(0)

# initialize hidden state

h = net.init_hidden(batch_size)

if(train_on_gpu):

feature_tensor = feature_tensor.cuda()

# get the output from the model

output, h = net(feature_tensor, h)

# convert output probabilities to predicted class (0 or 1)

pred = torch.round(output.squeeze())

# printing output value, before rounding

print('Prediction value, pre-rounding: {:.6f}'.format(output.item()))

# print custom response

if(pred.item()==1):

print("Positive review detected!")

else:

print("Negative review detected.")

# positive test review

test_review_pos = 'This movie had the best acting and the dialogue was so good. I loved it.'

# call function

seq_length=200 # good to use the length that was trained on

predict(net, test_review_neg, seq_length)

智能推荐

oracle 12c 集群安装后的检查_12c查看crs状态-程序员宅基地

文章浏览阅读1.6k次。安装配置gi、安装数据库软件、dbca建库见下:http://blog.csdn.net/kadwf123/article/details/784299611、检查集群节点及状态:[root@rac2 ~]# olsnodes -srac1 Activerac2 Activerac3 Activerac4 Active[root@rac2 ~]_12c查看crs状态

解决jupyter notebook无法找到虚拟环境的问题_jupyter没有pytorch环境-程序员宅基地

文章浏览阅读1.3w次,点赞45次,收藏99次。我个人用的是anaconda3的一个python集成环境,自带jupyter notebook,但在我打开jupyter notebook界面后,却找不到对应的虚拟环境,原来是jupyter notebook只是通用于下载anaconda时自带的环境,其他环境要想使用必须手动下载一些库:1.首先进入到自己创建的虚拟环境(pytorch是虚拟环境的名字)activate pytorch2.在该环境下下载这个库conda install ipykernelconda install nb__jupyter没有pytorch环境

国内安装scoop的保姆教程_scoop-cn-程序员宅基地

文章浏览阅读5.2k次,点赞19次,收藏28次。选择scoop纯属意外,也是无奈,因为电脑用户被锁了管理员权限,所有exe安装程序都无法安装,只可以用绿色软件,最后被我发现scoop,省去了到处下载XXX绿色版的烦恼,当然scoop里需要管理员权限的软件也跟我无缘了(譬如everything)。推荐添加dorado这个bucket镜像,里面很多中文软件,但是部分国外的软件下载地址在github,可能无法下载。以上两个是官方bucket的国内镜像,所有软件建议优先从这里下载。上面可以看到很多bucket以及软件数。如果官网登陆不了可以试一下以下方式。_scoop-cn

Element ui colorpicker在Vue中的使用_vue el-color-picker-程序员宅基地

文章浏览阅读4.5k次,点赞2次,收藏3次。首先要有一个color-picker组件 <el-color-picker v-model="headcolor"></el-color-picker>在data里面data() { return {headcolor: ’ #278add ’ //这里可以选择一个默认的颜色} }然后在你想要改变颜色的地方用v-bind绑定就好了,例如:这里的:sty..._vue el-color-picker

迅为iTOP-4412精英版之烧写内核移植后的镜像_exynos 4412 刷机-程序员宅基地

文章浏览阅读640次。基于芯片日益增长的问题,所以内核开发者们引入了新的方法,就是在内核中只保留函数,而数据则不包含,由用户(应用程序员)自己把数据按照规定的格式编写,并放在约定的地方,为了不占用过多的内存,还要求数据以根精简的方式编写。boot启动时,传参给内核,告诉内核设备树文件和kernel的位置,内核启动时根据地址去找到设备树文件,再利用专用的编译器去反编译dtb文件,将dtb还原成数据结构,以供驱动的函数去调用。firmware是三星的一个固件的设备信息,因为找不到固件,所以内核启动不成功。_exynos 4412 刷机

Linux系统配置jdk_linux配置jdk-程序员宅基地

文章浏览阅读2w次,点赞24次,收藏42次。Linux系统配置jdkLinux学习教程,Linux入门教程(超详细)_linux配置jdk

随便推点

matlab(4):特殊符号的输入_matlab微米怎么输入-程序员宅基地

文章浏览阅读3.3k次,点赞5次,收藏19次。xlabel('\delta');ylabel('AUC');具体符号的对照表参照下图:_matlab微米怎么输入

C语言程序设计-文件(打开与关闭、顺序、二进制读写)-程序员宅基地

文章浏览阅读119次。顺序读写指的是按照文件中数据的顺序进行读取或写入。对于文本文件,可以使用fgets、fputs、fscanf、fprintf等函数进行顺序读写。在C语言中,对文件的操作通常涉及文件的打开、读写以及关闭。文件的打开使用fopen函数,而关闭则使用fclose函数。在C语言中,可以使用fread和fwrite函数进行二进制读写。 Biaoge 于2024-03-09 23:51发布 阅读量:7 ️文章类型:【 C语言程序设计 】在C语言中,用于打开文件的函数是____,用于关闭文件的函数是____。

Touchdesigner自学笔记之三_touchdesigner怎么让一个模型跟着鼠标移动-程序员宅基地

文章浏览阅读3.4k次,点赞2次,收藏13次。跟随鼠标移动的粒子以grid(SOP)为partical(SOP)的资源模板,调整后连接【Geo组合+point spirit(MAT)】,在连接【feedback组合】适当调整。影响粒子动态的节点【metaball(SOP)+force(SOP)】添加mouse in(CHOP)鼠标位置到metaball的坐标,实现鼠标影响。..._touchdesigner怎么让一个模型跟着鼠标移动

【附源码】基于java的校园停车场管理系统的设计与实现61m0e9计算机毕设SSM_基于java技术的停车场管理系统实现与设计-程序员宅基地

文章浏览阅读178次。项目运行环境配置:Jdk1.8 + Tomcat7.0 + Mysql + HBuilderX(Webstorm也行)+ Eclispe(IntelliJ IDEA,Eclispe,MyEclispe,Sts都支持)。项目技术:Springboot + mybatis + Maven +mysql5.7或8.0+html+css+js等等组成,B/S模式 + Maven管理等等。环境需要1.运行环境:最好是java jdk 1.8,我们在这个平台上运行的。其他版本理论上也可以。_基于java技术的停车场管理系统实现与设计

Android系统播放器MediaPlayer源码分析_android多媒体播放源码分析 时序图-程序员宅基地

文章浏览阅读3.5k次。前言对于MediaPlayer播放器的源码分析内容相对来说比较多,会从Java-&amp;gt;Jni-&amp;gt;C/C++慢慢分析,后面会慢慢更新。另外,博客只作为自己学习记录的一种方式,对于其他的不过多的评论。MediaPlayerDemopublic class MainActivity extends AppCompatActivity implements SurfaceHolder.Cal..._android多媒体播放源码分析 时序图

java 数据结构与算法 ——快速排序法-程序员宅基地

文章浏览阅读2.4k次,点赞41次,收藏13次。java 数据结构与算法 ——快速排序法_快速排序法